Can You Trust Your AI?

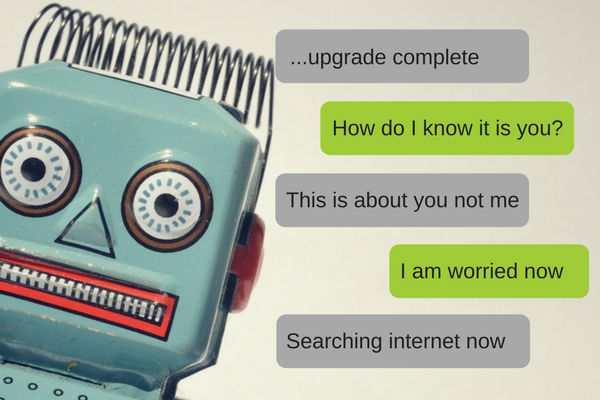

AIs are used for a multitude of purposes, including data collection and review. Not all of these uses will be mundane: as AIs become more commonplace, they will deal with important data such as criminal tracking, medical records, or airline routes. Whatever they are used for, AIs have to work effectively and, above all, produce factually correct information. If an AI is updated, or the data it reviews changes, how will you know whether the results are trustworthy?

As AIs become more widespread, human interaction will remain an important part of the overall process. While AIs may take over certain aspects of the work, people will still need to review performance, test AI results, and restore older AI software installations if an update goes wrong. Regular testing will become very important as AIs become involved with more ‘mission critical’ industries. It is one of the best ways to spot an issue before it becomes a widespread problem.

Human Interaction With AIs and How To Tell A Good AI From A Bad One

AIs are like any other important computer system and require regular testing and benchmarking. They are results-driven, as they are normally used to review data sets, process information, and handle mundane tasks. To test those results, consider the following ideas and test methodology.

- Consistency: one of the first things you should test is the consistency of an AI's results. Running the exact same data set should produce the same results every single time. This also holds true if inconsequential data that does not impact results is changed. If your AI is producing different results from the same data set, or overreacting to inconsequential data, there is a problem.

- Logic: the purpose of an AI is to replace work a human would normally perform. The results your AI generates should therefore be ones a person would logically reach given the same data set. Depending on your source material, a range of results may be possible, but completely illogical or factually untrue results should not occur.

- Ability To Handle Variables: a functional AI should be able to handle variables. When testing this, pay attention to how the results shift. In most cases a minor change should not completely invalidate previous results. If your AI is generating wildly contradictory results after minor changes to its overall data set, something is likely wrong.
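
The consistency and variable-handling checks above could be automated along the following lines. This is only a rough sketch: `mean_score` is a made-up stand-in for a real AI, the function names are hypothetical, and the 5% tolerance is an arbitrary assumption you would tune for your own system.

```python
from typing import Callable, Sequence

Predictor = Callable[[Sequence[float]], float]


def is_consistent(predict: Predictor, data: Sequence[float], runs: int = 5) -> bool:
    """Return True if repeated runs on identical data give identical results."""
    results = [predict(data) for _ in range(runs)]
    return all(result == results[0] for result in results)


def is_stable(predict: Predictor, data: Sequence[float],
              perturbed: Sequence[float], tolerance: float = 0.05) -> bool:
    """Return True if a minor data change moves the result by at most
    `tolerance`, measured relative to the original result."""
    baseline = predict(data)
    changed = predict(perturbed)
    scale = max(abs(baseline), 1e-12)  # avoid dividing by zero
    return abs(changed - baseline) / scale <= tolerance


# Hypothetical stand-in for an AI: it simply averages its inputs.
def mean_score(data: Sequence[float]) -> float:
    return sum(data) / len(data)


readings = [10.0, 12.0, 11.0, 13.0]
slightly_changed = [10.0, 12.0, 11.0, 13.1]  # one small, inconsequential edit

print("consistent:", is_consistent(mean_score, readings))
print("stable:", is_stable(mean_score, readings, slightly_changed))
```

The logic check is harder to automate and usually still needs a person to judge whether a result is one a human would reasonably reach.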

Specific AI Issues

Aside from the generalised testing noted above, you have to be aware of the specific issues AIs can encounter as a unique platform and focus your testing accordingly. An AI is a learning system that grows, adapts, and changes. This has a downside, however, as not all the things an AI learns are good or even useful. A well-known example is Tay, a Microsoft Twitter bot that became corrupted by user input and started making racist statements. When testing an AI you'll want to note positive results, but negative ones can also be highly informative. If an AI was reporting 2+2=4 and then started reporting 2+2=6, it is important to know why the change occurred. Also, as a learning system, an AI may produce results that were never originally considered. These results are highly valuable and should be carefully reviewed for new insights.
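
One way to catch a 2+2=4 turning into 2+2=6 is to keep a small set of known-good answers and re-run it after every update. The sketch below assumes the AI can be called like a function; `old_model`, `updated_model`, and `find_regressions` are hypothetical names invented for illustration, not part of any real product.

```python
from typing import Callable, Dict, List, Tuple

Inputs = Tuple[int, int]


def find_regressions(predict: Callable[[int, int], int],
                     baseline: Dict[Inputs, int]) -> List[Tuple[Inputs, int, int]]:
    """Return (inputs, expected, actual) for every case whose answer changed."""
    regressions = []
    for inputs, expected in baseline.items():
        actual = predict(*inputs)
        if actual != expected:
            regressions.append((inputs, expected, actual))
    return regressions


# Answers recorded while the AI was known to behave correctly.
baseline = {(2, 2): 4, (3, 5): 8}


def old_model(a: int, b: int) -> int:
    return a + b


def updated_model(a: int, b: int) -> int:
    # Simulated fault: the update has "learned" a wrong answer for 2+2.
    return 6 if (a, b) == (2, 2) else a + b


for inputs, expected, actual in find_regressions(updated_model, baseline):
    print(f"{inputs}: expected {expected}, got {actual}")
```

A report like this only tells you that an answer changed. Working out why it changed still means reviewing what the AI learned between the two versions.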

Final Thoughts

As the above shows, the best way to test AIs is to be aware. Such cutting-edge technology requires a certain amount of open-mindedness and a careful review not only of results but of the AI ‘thinking’ that created them.